Featured

The $52 Billion Question: Why 70% of AI Agent Deployments Fail (And 3 That Succeeded)

¶

Here’s a number that should stop any CIO cold: $52 billion.

That’s where the agentic AI market is headed by 2030, a wave of autonomous systems making decisions, executing workflows, and operating with minimal human intervention at every step. Boards are excited. Vendors are salivating. Budgets are being greenlit across industries from healthcare to retail to financial services.

There’s just one problem. Between 70% and 95% of AI agent deployments are failing. Right now. On your competitors’ infrastructure, and possibly your own.

Not failing quietly, either. Failing expensively. One analysis by ParallelLabs tracked $3.8 billion invested in generative AI pilots, most of which stalled or were quietly shelved within 18 months. A separate study of 127 enterprise implementations by AgentModeAI found that 73% fail completely, while only 27% survive long enough to generate meaningful returns. And Hypersense Software’s January 2026 production analysis puts the figure even higher, 88% of AI agents never reach production at all.

So what separates the projects that succeed from the ones hemorrhaging millions in pilot purgatory?

After analyzing data from dozens of enterprise deployments, three root-cause failures emerge with uncomfortable consistency: inadequate data governance, missing observability infrastructure, and a dangerously naive understanding of what “autonomy” actually means. Three fundamental flaws. Each one entirely preventable.

⚠ Three fundamental flaws kill most AI agent projects. The first two are well-documented. The third, which we’ll dissect in Section 3, surprised even veteran implementers.

Section 01

The AI Agent Failure Epidemic Is Worse Than Anyone’s Admitting

¶

The statistics alone don’t capture it. You need to feel the texture of this problem.

Enterprises are not running small, cautious pilots. They’re committing real capital, engineering teams, cloud infrastructure, licensing fees, integration work, to agentic systems that promise to automate complex, multi-step workflows. Customer service agents that handle escalations. Research agents that synthesize literature. Code agents that review pull requests and suggest architectural improvements.

Then, somewhere between proof-of-concept and production, something breaks. The agent hallucinates data it was supposed to retrieve from a CRM. It routes a customer complaint to the wrong team 30% of the time, but no one catches it for six weeks. It makes a compliance-relevant decision without logging why, and the audit team flags it two quarters later.

The LinkedIn CIO community’s December 2025 pulse analysis is blunt about this: ‘84% of Agentic AI projects fail because organisations are making a fundamental strategic error, they’re deploying agents without redesigning the workflows.’ Agents get bolted onto existing processes rather than integrated into redesigned ones. The result is sophisticated technology doing a mediocre job faster.

Meanwhile, ParallelLabs’ synthesis of MIT and McKinsey research suggests 95% of generative AI pilots fail or underperform, a figure encompassing not just outright failures but the even more dangerous category of deployments that appear to work while silently degrading in accuracy, compliance, or reliability.

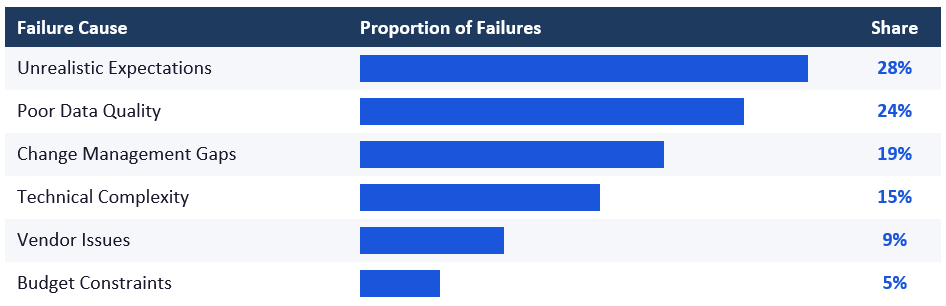

AgentModeAI’s analysis of 127 enterprise implementations identified six primary failure categories. The breakdown reveals exactly where attention and budget need to go:

Source: AgentModeAI Enterprise Deployment Analysis, August 2025 (n=127 implementations)

Notice what’s at the top. Not technical limitations. Not budget. Expectations and data quality account for more than half of all failures combined. That’s not a technology problem, it’s a strategy and infrastructure problem.

Three of those six categories map directly to the infrastructure flaws we’re going to dissect. If you’re planning a deployment, consider this your warning system.

Section 02

Flaw #1 — Inadequate Data Governance Is Quietly Killing Your Deployment

¶

Poor data quality drives 24% of AI agent failures. On its own, that number is alarming. In context, it’s catastrophic.

AI agents don’t just consume data the way a dashboard or analytics tool does. They act on it. They make decisions, trigger workflows, and execute transactions based on whatever information they’re fed. A bad dashboard shows you wrong numbers, frustrating, fixable. A bad AI agent sends your customer an incorrect refund, recommends the wrong drug dosage interaction to a clinician, or approves a loan application that should have been flagged.

The stakes of data governance failures scale with the autonomy level of the agent. And most enterprises deploying agents in 2025 and 2026 are dramatically underestimating that scaling effect.

What “inadequate data governance” actually looks like in practice

It starts with schema inconsistency. Your CRM was built in 2019. Your ERP has a different field definition for “customer ID.” Your data warehouse has been patched seventeen times by three different contractors. The agent trying to synthesize information across these systems isn’t just confused, it’s operating on false premises, with no mechanism to flag its own uncertainty.

Then there’s data freshness. Agentic systems operate in near-real-time, but enterprise data pipelines often lag by hours or days. An agent making inventory reorder decisions based on yesterday’s fulfillment data in a high-velocity SKU environment isn’t autonomous, it’s a liability dressed as automation.

Finally, there’s provenance. When an agent makes a consequential decision, can you trace exactly which data inputs drove that decision, at what timestamp, from what source? Most deployments can’t. That’s not just an audit problem. It’s a trust problem. As Kore.ai’s AI Agent Governance guide notes, decision provenance and behavioral monitoring are essential infrastructure, not optional add-ons.

AgentModeAI’s analysis of 50 failed enterprise cases found poor data quality at the center of nearly a quarter of failures, and those are only the ones that failed visibly. The more insidious failures are the ones where degraded data quality causes gradual output drift that no one catches until a downstream system breaks or a compliance review uncovers anomalies.

The governance fix that actually works

The enterprises that succeed treat data governance as a precondition for deployment, not an afterthought. Before any agent goes to production, they run structured audits: What data sources will this agent access? Who owns each source? What’s the refresh frequency? What happens when a query returns null or contradictory values?

This isn’t glamorous work. It doesn’t make for impressive demo videos. But the 27% of deployments achieving 312% ROI over two years share one consistent commonality: their data infrastructure was enterprise-ready before the agent was enterprise-deployed.

Build quality pipelines. Define data contracts between systems. Implement validation layers that flag anomalies before they reach the agent’s context window. Treat your data as the agent’s operating environment, because that’s exactly what it is.

Section 03

Flaw #2 — Missing Observability Means Flying Blind at 30,000 Feet

¶

You would never deploy a production database without monitoring. You’d never run a payment processing system without logging every transaction. So why are enterprises deploying autonomous AI agents, systems making real decisions with real consequences, without observability infrastructure?

They are. Widely. And it’s creating the kind of compounding, invisible failures that are very hard to diagnose and very expensive to clean up.

IBM’s research on AI agent observability frames the risk directly: without visibility into how agents operate, enterprises face compliance violations and operational failures that can go undetected until significant damage has been done. The problem isn’t just that things go wrong, it’s that you don’t know they’re going wrong.

Lumenova AI’s executive guide for CIOs and CTOs (February 2026) puts it plainly: “Autonomous agents create unexpected failure modes. Observability enables real-time anomaly detection.” That sounds obvious. The implementation reality is more complex than most teams anticipate.

Why observability for AI agents is fundamentally different

Traditional software monitoring tracks known failure modes. Did the API call return a 500 error? Did query execution time exceed threshold? These are binary, measurable, definable conditions.

AI agent failures are often probabilistic and contextual. The agent might be technically “working”, returning outputs, completing tasks, avoiding errors in the traditional sense, while drifting in accuracy, making subtly incorrect decisions, or operating outside its intended behavioral parameters. None of that shows up in a standard APM dashboard.

Lumenova’s research surfaces two specific manifestations of this problem: shadow agents and policy drift. Shadow agents emerge when deployed agents spawn sub-agents or make API calls to external services outside the monitored perimeter, a governance nightmare that’s surprisingly common in multi-agent architectures. Policy drift occurs when an agent’s behavior gradually diverges from its intended parameters over time, often due to distribution shift in input data. Without behavioral monitoring, you won’t catch either until a human notices something wrong, which could be weeks or months after the drift began.

There’s also the regulatory dimension. The EU AI Act, now in enforcement phase, places explicit requirements on high-risk AI systems for transparency, explainability, and audit trails. An AI agent making consequential decisions in healthcare, finance, or HR without full observability infrastructure isn’t just operationally risky, it’s potentially non-compliant. Kore.ai’s observability analysts are direct on this point: “The absence of agent monitoring is now one of the biggest technical and governance risks” in enterprise AI deployment.

What real observability looks like

Mature deployments instrument agents at three levels. First, decision logging, every significant decision the agent makes gets recorded with the inputs, model state, and reasoning chain that produced it. Second, behavioral monitoring, continuous tracking of output distributions to detect drift against baseline benchmarks. Third, integration auditing, complete visibility into every external system the agent touches, with access logs tied to specific agent actions and timestamps.

This isn’t cheap infrastructure to build. But it’s cheap compared to a compliance violation, a customer trust crisis, or an operational failure that takes weeks to diagnose after the fact.

The EU AI Act isn’t softening. Enterprise regulatory scrutiny isn’t decreasing. Observability isn’t optional anymore, it’s table stakes for any serious deployment in 2026.

Section 04

Flaw #3 — The Autonomy Myth Is the Most Expensive Mistake in Enterprise AI

¶

Here’s the pitch that’s gotten enterprises into trouble: “Deploy our agent and it handles everything autonomously. Just set it and forget it.”

It’s seductive. It’s the promise that justifies the budget. And according to AgentModeAI’s enterprise data, it’s responsible for 28% of all AI agent deployment failures, the single largest failure category in the dataset.

Unrealistic autonomy expectations don’t just cause project failure. They cause project failure after significant investment, which is worse. Teams build deployment architectures around the assumption that the agent will handle edge cases, ambiguous situations, and novel inputs the way a skilled human operator would. When the agent encounters something outside its training distribution, which happens constantly in real enterprise environments, the project collapses without the human escalation paths needed to catch it.

The LinkedIn CIO Pulse diagnosed this precisely: organisations are “deploying agents without redesigning the workflows.” That phrase deserves unpacking, because it captures the fundamental misunderstanding at the heart of most failed deployments.

Agents augment redesigned workflows. They don’t automate broken ones.

When companies bolt an AI agent onto an existing workflow, they’re making an implicit assumption: that the workflow is already optimized for automation. It almost never is. Human workflows are built around human judgment, human exception handling, and human contextual awareness. They contain thousands of micro-decisions, when to escalate, when to ask for clarification, when a situation is unusual enough to warrant a different approach, that humans make instinctively without even noticing.

Agents can’t make those decisions without explicit design. And most deployments don’t provide it.

The result is predictable: the agent handles the 70% of cases that are simple and well-defined, fails on the 30% that require judgment, and because there’s no structured escalation path, those failures either go unresolved or require frantic human intervention that defeats the purpose of automation entirely.

The spectrum model: where autonomy actually works

Hypersense Software’s January 2026 analysis of production deployment data reveals that the most successful agentic systems don’t aim for full autonomy, they operate on a carefully designed spectrum. At one end: fully supervised agents that recommend actions for human approval. At the other: fully autonomous agents for narrow, well-defined, low-stakes tasks with full observability. In between: a range of human-in-the-loop configurations calibrated to task criticality and agent confidence levels.

The 27% of deployments generating 312% ROI don’t succeed because they automated more. They succeed because they automated correctly, identifying which tasks benefit from automation, designing explicit escalation paths for everything else, and building feedback loops that improve agent performance over time based on human corrections.

Workflow redesign isn’t a concession. It’s a precondition. Before any agent goes to production, the team needs a map of every decision point in the workflow, a classification of which decisions are automation-ready and which require human judgment, and a clear protocol for handling the boundary cases between them.

Failure Flaws vs. Success Fixes

| Flaw | Impact | Fix | Success Metric |

| Inadequate Data Governance | 24% of all failures | Audit data sources; build quality pipelines | 312% ROI within 2 years |

| No Observability | Compliance violations; silent drift | Real-time monitoring & decision logging | Anomalies caught before damage occurs |

| Autonomy Myths | 28% of all failures | Workflow redesign; explicit escalation paths | 40% resolution gain; 30% productivity lift |

Sources: AgentModeAI (2025), Lumenova AI (Feb 2026), LinkedIn CIO Pulse (Dec 2025)

Section 05

Three Deployments That Got It Right

¶

Numbers and frameworks only go so far. The 27% that succeed have something to teach the 73% that don’t, and the lessons are more specific than “do better data governance.”

Here’s what production success actually looked like in three real deployments.

Case Study 1 | Genentech — Research Automation at Scale

📊 Result: 90% reduction in research synthesis timelines

Genentech’s research teams were spending enormous time synthesizing scientific literature, critical to drug discovery timelines but inherently time-consuming. Literature review, cross-referencing studies, identifying relevant prior art, flagging contradictory findings. Skilled scientists doing work that felt like it should be automatable.

Rather than building a single “research agent” and hoping it would figure things out, Genentech designed a multi-agent architecture with explicit specialization. One agent class handled retrieval and initial synthesis. Another performed cross-referencing and contradiction detection. A third generated summaries calibrated for different audiences, senior researchers vs. regulatory affairs teams. Human researchers remained in the loop for final assessment.

Critically, the data governance foundation was built before the agents were deployed. Research databases were audited for consistency, access permissions were formalized, and retrieval outputs were validated against known ground-truth studies before the system went live.

Research synthesis timelines improved by approximately 90%, not because the agent was faster than a human, but because it could run parallel across dozens of literature threads simultaneously while human researchers focused their time on the judgment-intensive analysis that actually requires scientific expertise.

Case Study 2 | Amazon Q — Developer Productivity at Enterprise Scale

📊 Result: +30% developer productivity across enterprise codebase

Developer productivity tools have always promised more than they’ve delivered. Code completion is useful. But the real productivity bottleneck isn’t writing code, it’s navigating codebases, understanding legacy systems, and context-switching between tasks.

Amazon Q was built around a specific, bounded use case: helping developers understand and work within Amazon’s own massive internal codebase. Rather than trying to be an autonomous coding agent, it was designed as an intelligent assistant with deep integration into existing developer workflows, code review, documentation, onboarding, and security scanning.

The observability infrastructure was built into the product architecture from the start. Every interaction was logged, every suggestion tracked against eventual developer acceptance or rejection, and those signals fed continuous improvement loops. The team could see, in near-real-time, where the agent was adding value and where it was generating noise.

Developer productivity metrics improved by approximately 30%, a substantial gain in an environment where developers are expensive and their attention is genuinely scarce. Because observability was built in, the team could identify failure modes early, improve the agent’s behavior systematically, and maintain performance quality as usage scaled.

Case Study 3 | Enterprise Retail — Customer Service Resolution

📊 Result: 40% improvement in first-contact resolution rates

A major enterprise retailer was struggling with customer service at scale. Resolution rates were inconsistent, escalation paths were poorly defined, and human agents spent significant time on repetitive, low-judgment inquiries that created bottlenecks for the complex cases requiring real expertise.

The AI agent deployment was preceded by a complete workflow redesign, exactly the step that 84% of failed deployments skip. The team mapped every customer inquiry type, classified each by complexity and judgment requirements, and identified the specific subset where agent handling was genuinely appropriate: simple order tracking, standard return initiations, shipping status updates with known resolution protocols.

Everything outside that scope triggered an immediate, smooth escalation to a human agent, with full context transferred so the customer didn’t have to repeat themselves. The AI agent didn’t try to handle edge cases. It was explicitly designed not to.

Data governance was addressed through integration audits before launch: every system the agent would query (order management, inventory, logistics) was validated for data freshness and consistency. Observability dashboards tracked resolution rates, escalation frequency, and customer satisfaction scores in real time.

First-contact resolution rates improved by 40%. Not because the agent was handling more volume, it was handling the right volume. Human agents, freed from repetitive inquiries, achieved better outcomes on the complex escalations that actually required their skills.

The workflow redesign took six weeks. The deployment took four. That sequencing, governance and design before deployment, is the pattern that separates this case from the 73% that failed.

Conclusion

The Failure Avoidance Checklist

¶

The three success cases share a common architecture. Not the technology stack, the approach. Before you commit another dollar to an agentic AI deployment, run this checklist.

- Audit data governance before writing a single line of agent code. Map every data source the agent will access. Validate freshness, consistency, and provenance. Define what happens when data is missing, stale, or contradictory.

- Define success metrics before deployment. Not “the agent works”, measurable outcomes. Resolution rates. Accuracy thresholds. Latency targets. Escalation frequency.

- Redesign the workflow, not just the tool. Map every decision point in the target workflow. Classify each by automation-readiness. Design explicit escalation paths for judgment-intensive cases.

- Build observability infrastructure in parallel with agent development. Decision logging, behavioral monitoring, integration auditing. These are foundational infrastructure, not post-deployment additions.

- Start with a conservative autonomy level. Default to human-in-the-loop for consequential decisions. Expand autonomy only when performance data justifies it.

- Define and document escalation protocols. What triggers escalation? Who receives it? What context transfers? Every deployment needs explicit answers before go-live.

- Run EU AI Act compliance review before production. If your agent touches high-risk domains, review against applicable regulatory requirements now. Retrofitting compliance is dramatically more expensive than building it in.

- Establish performance baselines in the first 30 days. Set measurement periods. Track output distributions. Define what “drift” looks like. Plan your first formal review at 30 days post-launch.

- Create feedback loops between agent outputs and human corrections. Every time a human overrides or escalates an agent decision, that’s a training signal. Build systems to capture those signals systematically.

- Plan for iteration, not perfection. The 27% that generate 312% ROI don’t launch perfect agents. They launch instrumented agents, systems designed to improve based on real-world performance data.

The $52 Billion Question Has a $0 Answer

The uncomfortable truth about the AI agent failure epidemic: most of these projects aren’t failing because the technology doesn’t work. They’re failing because the organizations deploying the technology aren’t ready for what autonomous systems actually require.

Data governance isn’t a technology problem. Observability isn’t a vendor problem. Unrealistic autonomy expectations aren’t an AI problem. They’re organizational problems, and they have organizational solutions.

The market is heading to $52 billion by 2030. The enterprises that capture that value won’t necessarily be the ones with the biggest AI budgets or the most sophisticated models. They’ll be the ones that did the unglamorous work first: auditing their data, building their observability infrastructure, redesigning their workflows, and calibrating their autonomy expectations against what production deployments can actually deliver.

The 27% already know this. They’re building ROI on it right now.

The question is whether your next deployment joins that 27%, or the other 73% quietly paying tuition.