Meta’s AWS Graviton5 Deal: The CPU-Dense Agent Stack Nobody Explained

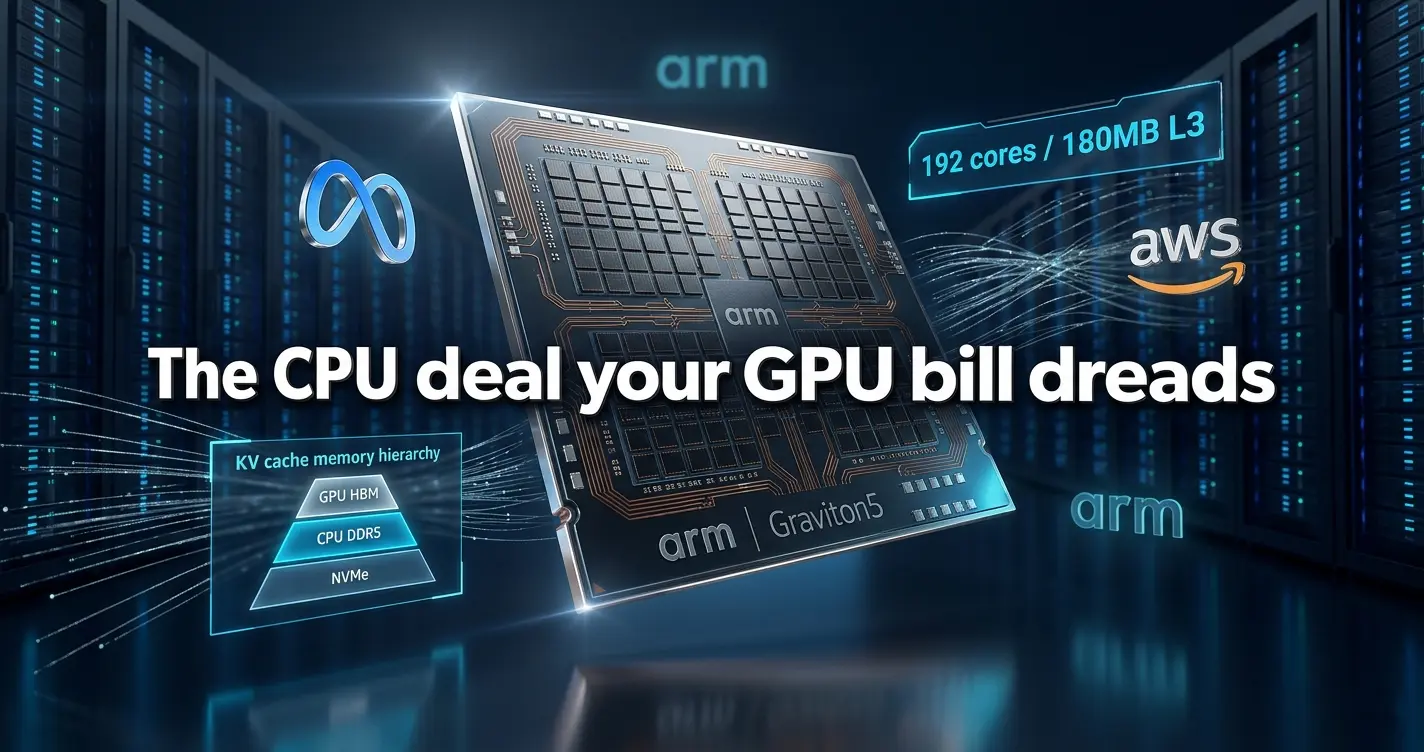

Tens of millions of 192-core Arm chips. A multi-billion-dollar, multi-year agreement. Every outlet covered the price tag. Almost none of them explained the physics that make it necessary, or the silicon roadmap it quietly validates.

What the Press Release Buried

When Meta announced its agreement with AWS to deploy tens of millions of Graviton5 cores for agentic AI workloads, the coverage pattern was predictable. Bloomberg and Reuters counted the zeros. TechCrunch called it the “end of GPU monoculture.” Hacker News debated whether Arm chips could ever match NVIDIA throughput.

Nobody explained why Meta actually needs 192-core CPUs at planetary scale to run agents. That gap matters, because the reason is technical, structural, and points directly at where the next $100 billion in AI infrastructure spend is headed.

This deal is not supply-chain hedging. It is an orchestration-first scaling strategy, one that treats the GPU as a narrow compute accelerator and the CPU cluster as the actual state machine holding multi-agent sessions together. To understand why, you have to start with the memory problem nobody is talking about.

What Actually Happened, With Primary Sources

On April 24, 2026, Meta and AWS formalized a multi-year, multi-billion-dollar agreement in which Meta commits to deploying AWS Graviton5 processors at scale to host agentic AI workloads. The deal gives Meta access to tens of millions of Graviton5 cores across AWS’s global infrastructure.

Reuters confirmed the deal’s structure as a long-term commitment rather than a spot purchase. TechCrunch noted it as an unusual move for a company with its own NVIDIA GPU clusters and a growing custom silicon program. What neither outlet explained is why the timing aligns precisely with the launch of Arm’s AGI CPU, the chip that shares its Neoverse-V3 DNA with Graviton5.

Graviton5, now powering M9g EC2 instances, is built on a 3nm-class process. It packs 192 Neoverse-V3 cores and roughly 180 to 192 MB of L3 cache, a 5x increase over the prior generation. It supports DDR5-8800 memory, and a redesigned inter-core layout cuts core-to-core latency within the chip by 33%.

The Numbers Every Engineer Should Have

AWS claims Graviton5-based M9g instances deliver 25% higher performance versus their predecessors. That number is real but deliberately modest in isolation. The structural story is in the cache and memory bandwidth figures.

Graviton5 supports DDR5-8800 across multiple channels, pushing aggregate memory bandwidth into high-end territory. Arm’s own AGI CPU, the sibling chip that shares the same core design, reaches 800+ GB/s across 12 DDR5-8800 memory channels, with 136 Neoverse-V3 cores at 300W TDP. These are not consumer metrics. They are designed for one job: managing the memory traffic generated by tens of thousands of concurrent AI agent sessions.
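The 800+ GB/s figure can be sanity-checked from the channel count and transfer rate alone. The sketch below assumes a 64-bit (8-byte) data path per DDR5 channel, which is the standard channel width but is not stated in the announcements; the function name is illustrative.

```python
def ddr_peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    MT/s multiplied by bytes per transfer gives MB/s per channel;
    dividing by 1000 converts the total to GB/s.
    bus_bytes=8 is an assumption: a 64-bit data path per DDR5 channel.
    """
    return channels * mega_transfers_per_s * bus_bytes / 1000


# 12 channels of DDR5-8800, as quoted for Arm's AGI CPU
peak = ddr_peak_bandwidth_gbs(channels=12, mega_transfers_per_s=8800)
print(f"Theoretical peak: {peak:.1f} GB/s")  # 844.8 GB/s
```

This is a theoretical ceiling. Sustained bandwidth under real access patterns will be lower, which is why the figure is quoted as "800+".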

Graviton chips already account for roughly half of all new CPU capacity added to AWS over the past three years. This deal accelerates that trajectory with one of the largest single customers in the cloud industry.

For context on capex: Alphabet, Amazon, and Meta are collectively spending close to $400 billion on AI infrastructure in 2026, with Meta committed to tens of billions across both GPU and CPU-centric stacks. The Graviton5 deal sits within that broader capital allocation, not outside it.

KV Cache: The Problem That Makes This Deal Make Sense

This is what mainstream coverage skipped entirely. Every large language model stores a key-value (KV) cache during inference. For each active session, the model maintains key and value matrices for every attention layer across every token it has processed. At short contexts, this fits comfortably in GPU HBM. At 128,000 tokens, it does not.

A single user session running Llama-3 70B at 128k tokens generates approximately 40 GB of KV cache in 16-bit precision. An H100 GPU carries 80 GB of HBM. One user’s context fills half a GPU’s memory budget. At scale, this is not a tuning problem. It is a physics problem.
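The 40 GB figure follows directly from the model's shape. The sketch below assumes the published Llama-3 70B configuration (80 layers, 8 key-value heads under grouped-query attention, head dimension 128); those values and the function name are not from the deal coverage and should be checked against the model you actually serve.

```python
GIB = 1024 ** 3


def kv_cache_bytes(tokens: int, layers: int = 80, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """KV cache size for one session.

    The leading 2 accounts for one key vector and one value vector
    per layer, per KV head, per token. Defaults are an assumed
    Llama-3 70B configuration at 16-bit precision.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


per_token = kv_cache_bytes(1)
full_context = kv_cache_bytes(128 * 1024)

print(f"Per token: {per_token / 1024:.0f} KiB")            # 320 KiB
print(f"128k-token session: {full_context / GIB:.1f} GiB")  # 40.0 GiB
```

Models without grouped-query attention store one KV head per attention head, so the same context length can cost several times more memory.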

The solution is tiered memory management: the GPU retains only the active KV vectors for the current forward pass. The CPU cluster, armed with DDR5-8800 and a massive L3 cache, holds the full session state and feeds slices back to the GPU on demand. Systems like LMCache and vLLM-style KV offload stacks already implement this architecture in production.
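A toy model makes the tiering idea concrete. This is a minimal sketch, not the API of LMCache or vLLM: the class, method names, and block granularity are all hypothetical, and a small least-recently-used "GPU" tier stands in for HBM while a plain dictionary stands in for CPU DRAM.

```python
from collections import OrderedDict


class TieredKVStore:
    """Toy two-tier KV block store (hypothetical, for illustration only)."""

    def __init__(self, gpu_capacity_blocks: int):
        self.gpu_capacity = gpu_capacity_blocks
        self.gpu = OrderedDict()  # hot tier, ordered oldest to newest use
        self.cpu = {}             # CPU tier holds the full session state

    def put(self, block_id, data):
        # New KV blocks are always written to the CPU tier.
        self.cpu[block_id] = data

    def fetch(self, block_id):
        """Return (data, source) and promote the block into the GPU tier."""
        if block_id in self.gpu:
            self.gpu.move_to_end(block_id)  # mark as most recently used
            return self.gpu[block_id], "gpu_hit"
        data = self.cpu[block_id]           # raises KeyError if never stored
        self.gpu[block_id] = data
        if len(self.gpu) > self.gpu_capacity:
            self.gpu.popitem(last=False)    # evict the least recently used block
        return data, "cpu_fetch"


store = TieredKVStore(gpu_capacity_blocks=2)
for i in range(4):
    store.put(("session-a", i), f"kv-block-{i}")

print(store.fetch(("session-a", 0))[1])  # cpu_fetch
print(store.fetch(("session-a", 1))[1])  # cpu_fetch
print(store.fetch(("session-a", 0))[1])  # gpu_hit
print(store.fetch(("session-a", 2))[1])  # cpu_fetch, evicts block 1
```

Real offload stacks add prefetching, asynchronous copies, and further tiers such as NVMe, but the core contract is the same: the large tier is authoritative and the small tier is a cache.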

Production benchmarks from storage-attached offload systems show time-to-first-token reductions of up to 14x for full 131k-token contexts. Graviton5’s 180+ MB L3 cache is purpose-built to sit at the top of this memory hierarchy, absorbing the hot portion of the KV pool before traffic spills to DDR5 or NVMe.

This is why 192 cores per die matters more than raw clock speed. Each core needs enough local cache bandwidth to serve slice requests from multiple concurrent GPU inference threads without creating a bottleneck at the CPU-to-GPU interconnect. The chip design is explicitly shaped around this access pattern.
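A rough transfer-time estimate shows why the interconnect, rather than CPU DRAM, is the likely choke point. The 800 GB/s figure is the one quoted earlier for the sibling AGI CPU; the PCIe number is an assumption (about 64 GB/s, the approximate theoretical one-direction rate of a PCIe 5.0 x16 link) and may not match the links actually deployed.

```python
def transfer_ms(gigabytes: float, link_gb_per_s: float) -> float:
    """Time in milliseconds to move a payload over a link at its peak rate."""
    return gigabytes / link_gb_per_s * 1000


KV_SESSION_GB = 40   # one 128k-token session, from the estimate above
DRAM_GB_PER_S = 800  # CPU memory bandwidth quoted in the article
PCIE_GB_PER_S = 64   # assumption: approximate PCIe 5.0 x16, one direction

print(f"Read from CPU DRAM:  {transfer_ms(KV_SESSION_GB, DRAM_GB_PER_S):.0f} ms")  # 50 ms
print(f"Ship over PCIe link: {transfer_ms(KV_SESSION_GB, PCIE_GB_PER_S):.0f} ms")  # 625 ms
```

Moving a whole session to the GPU on every turn would cost hundreds of milliseconds at best, which is why practical offload designs send only the slices the current forward pass needs.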

“At 40 GB per 128k token context, you’re effectively running a distributed memory pool at line rate. We’re already bumping up against memory-controller and PCIe switching bottlenecks.”

Anonymous Meta contractor, via SNIA storage conference notes

Nitro System: More Than a Security Buzzword

Coverage of this deal mentions the AWS Nitro System as a footnote about security. It deserves considerably more attention, especially for teams building agentic AI with compliance obligations.

Nitro provides hardware-isolated execution environments for individual agent sessions. Each session runs inside a Nitro-anchored VM with API-level monitoring and isolation mechanisms that have been subject to formal verification-style analysis. NCC Group’s independent security analysis concludes the Nitro architecture credibly supports its isolation claims, with the important caveat that formal verification covers the specified security model, not the application logic running inside it.

For enterprise deployments, this matters for a forward-looking reason. EU regulatory proposals targeting autonomous agent accountability are moving toward requiring hardware-isolated execution environments for agents that take consequential actions. A Nitro-backed stack gives compliance teams something concrete to point to. Enterprises building agent workflows today should audit whether their current infrastructure can make the same claim.

The Bridge Nobody Drew: Graviton5 to Arm’s AGI CPU

The most under-reported angle of this deal is architectural. Graviton5 and Arm’s newly launched AGI CPU share the same Neoverse-V3 core design. Both are built on a 3nm-class process. Both are optimized for the same memory and interconnect patterns.

This means software Meta compiles and optimizes for Graviton5 today runs with minimal modification on Arm’s AGI CPU once Meta deploys it in its own data centers. The AWS deployment is not just a capacity play. It is a pre-production validation environment at scale, one that generates real workload telemetry and real software maturity before Meta’s private silicon ramps.

This is what “vertical integration proxy” actually means in practice. Meta captures AWS’s silicon R&D investment and operational scale while retaining the long-term capex efficiency of owning its own compute. When the AGI CPU is production-ready in Meta’s facilities, the migration path should be close to binary-compatible, because both chips run the same core design. The engineering team running on Graviton5 today is effectively the bring-up team for Meta’s next-generation CPU fleet.

The strategic move also hedges against NVIDIA’s margin structure. NVIDIA reports company-wide gross margins in the mid-70% range. Every dollar of agent orchestration shifted to an Arm CPU cluster is a dollar removed from that margin pool. Meta’s dual-stack strategy is an explicit response to that math, not an ideological stance on CPU versus GPU architectures.

Expert Voices: Four Perspectives Worth Having

The operational skeptic

Arun Kumar, a public cloud AI infrastructure architect whose observations circulate among practitioners on LinkedIn, frames the core risk clearly: “Meta’s move makes sense operationally, but it’s a bet on Arm-at-scale, not a guaranteed win. The real risk is debugging global agent coordination at tens of millions of CPU cores. This is more distributed-systems hell than chip marketing.”

The latency-tail concern

Researchers close to FAIR’s systems work point to a problem the deal’s announcements sidestep. Even with tens of millions of Graviton cores available, tool-call jitter in long-running agent sessions will dominate user experience if the agent runtime is not co-optimized with the Nitro offload stack. The hardware is necessary but not sufficient.

The semiconductor analyst view

Dan Friedman, a semiconductor analyst at Moor Insights and Strategy, offers a grounding perspective: “The 25% performance uplift is modest against NVIDIA’s Blackwell-Vera ecosystem. The real question is whether Meta can match GPU-only training and inference throughput at this scale.” Graviton5’s headline benchmark numbers are real, but they compare CPU-to-CPU, not CPU-to-GPU on inference tasks.

The security qualifier

NCC Group’s Philip Plückebaum, whose team produced the Nitro architecture analysis, adds the necessary caveat: “Formal verification only covers the specified security model. Malicious agent logic can still exploit software-side logic gaps, even if the hardware is sound.” Security teams should treat Nitro isolation as a floor, not a ceiling.

Winners, Losers, and Where Capital Is Moving

Who benefits directly

AWS and Arm are the clearest winners. AWS locks in one of the world’s largest AI spenders as a multi-year Graviton anchor customer. Arm’s AGI CPU acquires a flagship validation deployment through Meta, converting the chip from an interesting architectural exercise into a production-grade reference design. Orchestration-layer companies, specifically those building KV-cache management, distributed session state, and agent runtime infrastructure, find their market thesis confirmed by one of the largest infrastructure commitments in AI history.

Who faces structural pressure

NVIDIA-only stacks face a slow but real margin problem. Hyperscalers increasingly categorize NVIDIA’s per-GPU pricing as a tax on their AI capital spending. Each dollar of agent orchestration running on Arm CPUs does not go to Blackwell or Vera-Rubin. NVIDIA is responding with its own Arm-based Vera CPU within the Vera-Rubin platform, which is an acknowledgment of the trend rather than a rebuttal of it.

Traditional SaaS vendors treating AI as a feature addition face a different risk. Platforms that embed millions of autonomous agents at the infrastructure layer operate at a speed and cost structure that bolt-on AI cannot match.

Investment signal

Venture capital is moving away from foundation model funding toward “KV-cache-first” and “orchestration-first” infrastructure plays. AI-optimized storage, low-latency CXL memory stacks, and agent runtime platforms are attracting capital that would have gone to model training two years ago. The Meta-AWS deal is the largest single data point validating that shift.

Reality Check: What Is Real, What Is Not

The hardware is real. Graviton5 is shipping in M9g EC2 instances with double the core density of the prior generation and the 5x L3 cache expansion. KV cache offloading works in production today across multiple open-source inference stacks. Nitro-based isolation is in active use for AI workloads with independent security verification behind it.

What remains speculative: sustained deployment of tens of millions of cores at the performance levels marketing materials suggest. Inter-node coordination at that scale is a distributed systems problem that chip specs do not solve. Long-tail latency in KV offload lookups and Nitro isolation checks can still break session-level SLAs even when the average-case numbers are excellent.
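The gap between average-case and tail behavior is easy to show with made-up numbers. The latency profile below is hypothetical (99% of offload lookups at 2 ms, 1% at 80 ms) and is not measured data from any Graviton5 or Nitro deployment; the helper names are illustrative.

```python
import statistics


def percentile(samples, pct):
    """Simple rank-based percentile on a sorted copy of the samples."""
    ordered = sorted(samples)
    index = min(len(ordered) - 1, int(len(ordered) * pct / 100))
    return ordered[index]


def chance_of_slow_lookup(lookups_per_session: int, slow_fraction: float = 0.01) -> float:
    """Probability that a session hits at least one slow lookup,
    assuming lookups are independent (a simplifying assumption)."""
    return 1 - (1 - slow_fraction) ** lookups_per_session


# Hypothetical profile: 990 fast lookups at 2 ms, 10 slow lookups at 80 ms
lookups_ms = [2.0] * 990 + [80.0] * 10

print(f"mean: {statistics.mean(lookups_ms):.2f} ms")  # looks healthy
print(f"p50:  {percentile(lookups_ms, 50):.1f} ms")
print(f"p99:  {percentile(lookups_ms, 99):.1f} ms")   # the tail the mean hides
print(f"sessions with a slow lookup (200 lookups each): "
      f"{chance_of_slow_lookup(200):.0%}")
```

With these inputs the mean stays under 3 ms while roughly 87% of 200-lookup sessions see at least one 80 ms stall, which is the pattern that breaks session-level SLAs.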

The software coherence challenge is the honest limiting factor. Keeping agent state consistent across millions of CPU cores, multiple GPU clusters, and Nitro-isolated VMs requires orchestration infrastructure that does not yet exist off the shelf. Meta will have to build it, and the build timeline is not public.

The “bridge architecture” thesis connecting Graviton5 to Meta’s ramp of Arm’s AGI CPU is logical and technically sound, but it is an inference from public chip specifications and known relationships between AWS and Arm. Meta has not publicly confirmed this roadmap connection. Engineers should treat it as a well-grounded hypothesis, not a disclosed plan.

What You Should Do With This Information

For infrastructure engineers and ML engineers

Audit your current LLM inference stack for KV cache memory usage at your production context lengths. If you are running 32k tokens or more per session and have not implemented CPU-backed KV offloading, you are leaving latency improvements on the table. Evaluate LMCache or vLLM’s offload configurations against your workload profile. Run the numbers on GPU HBM utilization per session before your next capacity planning cycle.
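For the capacity-planning step, a back-of-the-envelope calculator is often enough to start. The sketch below is an assumption-laden example: the node size (8 GPUs with 80 GB each), the 10% reserve for activations and fragmentation, and the 140 GB weight footprint (70 billion parameters at 2 bytes each) are illustrative inputs, and the function name is hypothetical.

```python
def max_resident_sessions(hbm_gb: float, weights_gb: float,
                          kv_gb_per_session: float,
                          reserve_fraction: float = 0.10) -> int:
    """Sessions whose full KV cache fits in HBM alongside the model weights.

    reserve_fraction is an assumed safety margin for activations,
    fragmentation, and runtime overhead.
    """
    usable_gb = hbm_gb * (1 - reserve_fraction) - weights_gb
    if usable_gb <= 0:
        return 0  # the weights alone do not fit
    return int(usable_gb // kv_gb_per_session)


NODE_HBM_GB = 8 * 80  # assumption: one 8-GPU node, 80 GB HBM per GPU
WEIGHTS_GB = 140      # 70B parameters at 16-bit precision

print(max_resident_sessions(NODE_HBM_GB, WEIGHTS_GB, kv_gb_per_session=40))  # 128k context
print(max_resident_sessions(NODE_HBM_GB, WEIGHTS_GB, kv_gb_per_session=10))  # 32k context
print(max_resident_sessions(80, WEIGHTS_GB, kv_gb_per_session=40))           # single GPU
```

Under these assumptions an entire 8-GPU node holds about 10 fully resident 128k-token sessions, or about 43 at 32k tokens. Any concurrency target above that implies offloading, eviction, or shorter contexts.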

If your team is evaluating Graviton5-based EC2 instances, prioritize the M9g series and profile specifically for memory bandwidth saturation patterns, not just raw throughput. The cache hierarchy behavior under concurrent agent workloads is where Graviton5 differentiates, and standard benchmarks will not show it.

For CTOs and engineering leaders

The Meta-AWS deal signals that the next competitive layer in agentic AI is orchestration infrastructure, not model quality alone. If you are building agent-facing products and your architecture treats the CPU as a coordination afterthought rather than a primary compute tier, review that assumption now. The teams that win the next infrastructure cycle are the ones designing explicitly for CPU-GPU memory tiering and distributed state management.

On the compliance side: if your agents take consequential actions in regulated environments, map your current execution environment against what Nitro-anchored isolation provides. EU agent accountability requirements are being drafted now. Getting ahead of hardware isolation requirements before they are mandated is cheaper than retrofitting later.

Frequently Asked Questions

What is KV cache offloading, and why does it matter?

KV cache offloading moves the key-value attention matrices generated during LLM inference from GPU HBM into CPU DRAM or NVMe storage. At long context lengths (32k tokens and beyond), the combined KV cache of concurrent sessions quickly exceeds what fits in GPU memory. Offloading to a CPU cluster with fast DDR5 and large L3 cache allows the GPU to serve far more concurrent sessions. Systems implementing this correctly report first-token latency dropping by up to 14x for full 128k-token contexts, which is the difference between a usable agent experience and a timeout.

How does Graviton5 compare with AMD and Intel server CPUs?

Graviton5’s advantage is architectural density: 192 Neoverse-V3 cores per chip at 3nm, versus up to 96 Zen 4 cores per socket on AMD’s EPYC Genoa and up to 60 on Intel’s Sapphire Rapids Xeons (Intel’s newer Granite Rapids parts reach 128). More relevant to AI orchestration is Graviton5’s 180+ MB L3 cache, which matters specifically for serving KV cache slices with low access latency. Raw L3 capacity is not unique to it, though: a 96-core EPYC Genoa lists 384 MB, so the comparison that counts is cache behavior under your own workload. On raw floating-point throughput for matrix operations, dedicated GPU accelerators still dominate. Graviton5 competes on core density and memory hierarchy for coordination-heavy, memory-intensive agent tasks.

Why do AI agents need so many CPU cores?

Modern AI agents do far more than run a single forward pass. They manage conversation state, call external tools, parse API responses, route between specialized sub-models, and maintain session context across multiple turns. Most of that work is sequential, branchy, and memory-intensive rather than parallel and compute-intensive. GPUs excel at the matrix multiplications inside the model. CPUs handle everything around them: orchestrating the session, managing the KV cache, executing tool calls, and maintaining distributed agent state across concurrent sessions. At scale, the CPU layer is where throughput actually breaks down first.

What is the AWS Nitro System, and why does it matter for agents?

AWS Nitro is a dedicated hardware and software stack that offloads virtualization functions from the main CPU onto purpose-built Nitro cards. This gives each EC2 instance hardware-level isolation from neighbors, with API-monitored I/O and a formally analyzed security boundary. For agentic AI, Nitro means each agent session can run in an isolated execution environment with auditable I/O, which satisfies a requirement that EU-style agent accountability regulations are moving toward mandating. NCC Group’s independent security review confirmed Nitro’s architecture supports its isolation claims, with the caveat that it covers the hardware boundary, not the application logic inside it.

Does this deal mean CPUs are replacing NVIDIA GPUs?

Not for training, and not for inference of the largest frontier models. NVIDIA’s Blackwell and Vera-Rubin platforms remain the fastest available hardware for these workloads. What the deal does signal is that hyperscalers are unwilling to run their entire AI compute stack on NVIDIA silicon at NVIDIA’s margin structure. Agent orchestration, KV cache management, and session state maintenance are workloads that fit CPUs better than GPUs anyway, so the bifurcation is rational rather than ideological. NVIDIA’s response, shipping an Arm-based CPU in its Vera-Rubin platform, shows the company recognizes this boundary is real.

What is Arm’s AGI CPU, and how does it relate to Graviton5?

Arm’s AGI CPU is the company’s first full data-center processor, featuring 136 Neoverse-V3 cores at 3nm, 12 DDR5-8800 memory channels, and over 800 GB/s of aggregate memory bandwidth at 300W TDP. It shares the same core microarchitecture as Graviton5, meaning software optimized for one runs efficiently on the other. Meta is believed to be among the anchor customers for this chip. By deploying Graviton5 on AWS now, Meta’s software teams work in an environment that is architecturally near-identical to what they will run in Meta’s own data centers once the AGI CPU ramps.

How much is the deal worth?

Meta and AWS have described the agreement as multi-year and multi-billion-dollar without releasing specific contract figures. Given that Graviton5 instances are priced at commercial EC2 rates and Meta is committing tens of millions of cores over multiple years, analyst estimates place the total contract value in the range of several billion dollars, consistent with the scale of Meta’s broader AI capex program. The deal is structured as a committed deployment agreement rather than a spot purchase, which gives both sides revenue and capacity predictability across the contract term.

The Meta-AWS Graviton5 deal is best read as infrastructure documentation: a public record of where memory physics, silicon roadmaps, and agent architectures are converging. The KV cache problem is real: its footprint grows linearly with context length and multiplies across concurrent sessions, and CPUs with large cache hierarchies are the correct tool for managing it. Graviton5 is the right chip for this layer of the stack. The deal’s scale reflects how many concurrent agent sessions Meta is planning to run, not how many GPU clusters it is replacing.

The forward-looking read: as context windows grow and agent sessions extend to hours or days rather than seconds, the CPU memory management layer becomes more valuable, not less. Teams that build their orchestration infrastructure around this reality in 2026 will have a structural advantage over teams that treat the CPU tier as an afterthought. The next competitive differentiator in agentic AI is not which model you use. It is how efficiently your infrastructure manages the state around it.